Issue #54 - K-Nearest Neighbors

💊 Pill of the week

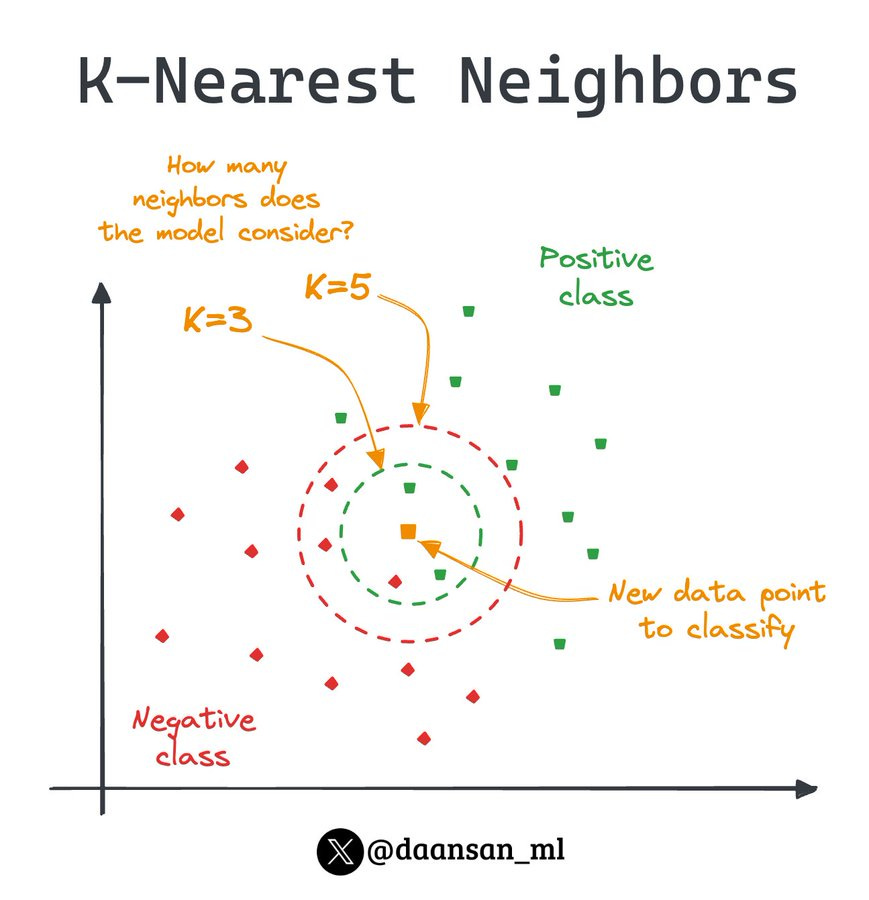

K-Nearest Neighbors (KNN) is a simple yet powerful supervised learning algorithm that has been widely used in various machine learning tasks, including classification and regression problems. Unlike many other machine learning algorithms that require a training phase to learn complex models, KNN takes a more intuitive approach by relying on the proximity of data points to make predictions.

How does it work?

The core idea behind KNN is to classify or predict a new data point based on the characteristics of its nearest neighbors in the feature space. The algorithm works as follows:

Data Representation: KNN assumes that the input data is represented as points in a multi-dimensional feature space, where each dimension corresponds to a feature.

Neighbor Identification: For a given new data point, KNN identifies the K closest (most similar) data points from the training set, based on a chosen distance metric (e.g., Euclidean distance, Manhattan distance).

Prediction: The algorithm then makes a prediction for the new data point based on the labels (for classification) or values (for regression) of its K nearest neighbors. For classification, the most common label among the K neighbors is assigned to the new data point. For regression, the prediction is typically the average or weighted average of the values of the K neighbors.
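The three steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a production implementation: the function name `knn_predict`, its parameters, and the toy data are all hypothetical choices made for this example.

```python
from collections import Counter

import numpy as np


def knn_predict(X_train, y_train, x_new, k=3, metric="euclidean", task="classification"):
    """Predict for a single point from its k nearest training points (illustrative sketch)."""
    diff = X_train - x_new
    if metric == "euclidean":
        distances = np.sqrt((diff ** 2).sum(axis=1))
    elif metric == "manhattan":
        distances = np.abs(diff).sum(axis=1)
    else:
        raise ValueError(f"unsupported metric: {metric}")

    # Neighbor identification: indices of the k smallest distances
    nearest = np.argsort(distances)[:k]
    neighbor_targets = y_train[nearest]

    if task == "classification":
        # Majority vote among the k neighbors
        return Counter(neighbor_targets.tolist()).most_common(1)[0][0]
    # Regression: unweighted average of the neighbors' values
    return float(neighbor_targets.mean())


# Toy data (made up for illustration): two well-separated clusters in 2D
X_train = np.array([[1.0, 1.0], [1.5, 2.0], [2.0, 1.5], [6.0, 6.0], [6.5, 7.0], [7.0, 6.5]])
y_class = np.array([0, 0, 0, 1, 1, 1])
y_value = np.array([10.0, 12.0, 11.0, 50.0, 52.0, 51.0])

x_new = np.array([1.8, 1.6])
label = knn_predict(X_train, y_class, x_new, k=3)
value = knn_predict(X_train, y_value, x_new, k=3, task="regression")
print(label, value)
```

Here the three closest points all belong to the first cluster, so the classification vote and the regression average are both drawn from that cluster only.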

The choice of the value of K, the distance metric, and the weighting scheme (if used) can significantly impact the performance of the KNN algorithm. These hyperparameters are often tuned using techniques like cross-validation to find the optimal configuration for a given problem.
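As a rough sketch of what such tuning could look like, the snippet below runs a cross-validated grid search with scikit-learn's `GridSearchCV`. The Iris dataset, the candidate values in `param_grid`, and the 5-fold setting are assumptions picked for illustration, not recommendations.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.neighbors import KNeighborsClassifier

# Example dataset (an assumption for this sketch)
X, y = load_iris(return_X_y=True)

# Candidate values for K, the weighting scheme, and the distance metric
param_grid = {
    "n_neighbors": [1, 3, 5, 7, 9, 11],
    "weights": ["uniform", "distance"],
    "metric": ["euclidean", "manhattan"],
}

# 5-fold cross-validation over every combination in the grid
search = GridSearchCV(KNeighborsClassifier(), param_grid, cv=5, scoring="accuracy")
search.fit(X, y)

print(search.best_params_)
print(round(search.best_score_, 3))
```

`best_params_` holds the combination with the highest mean cross-validated accuracy; on your own data the winning values will likely differ.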


KNN is a lazy learner

In machine learning, a lazy learner refers to an algorithm that doesn't build a model from the training data but instead memorizes the data in some form and waits until it receives new data to make predictions or decisions. These algorithms postpone the generalization phase until new data is available. They are also called instance-based learners.

The KNN algorithm is a classic example of a lazy learner:

Instead of learning a discriminative function from the training data, KNN simply stores instances of the training data and classifies new instances based on a similarity measure (usually distance) to the existing instances.

When a prediction is needed for a new data point, KNN searches through the entire training dataset to find the K nearest neighbors. It then assigns the majority label among them (in classification tasks) or computes their average or weighted average (in regression tasks).

Because KNN is lazy, it needs no explicit training phase: it does not build a model. The cost is deferred to prediction time, which can be computationally expensive with large datasets, since a brute-force search compares the new instance with every instance in the training set.
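To make the "lazy" behaviour concrete, here is a minimal sketch of an instance-based classifier whose `fit` only stores the data, while `predict` does all the work. The class name `LazyKNNClassifier` and the attribute `n_distance_computations_` are hypothetical, the random data is made up, and the majority vote assumes labels are non-negative integers.

```python
import numpy as np


class LazyKNNClassifier:
    """Illustrative lazy learner: fit() memorizes, predict() does the work."""

    def __init__(self, k=3):
        self.k = k

    def fit(self, X, y):
        # No model is built: the training data is simply stored
        self.X_ = np.asarray(X, dtype=float)
        self.y_ = np.asarray(y)
        return self

    def predict(self, X_new):
        X_new = np.asarray(X_new, dtype=float)
        # Brute force: one Euclidean distance per (query, training point) pair
        dists = np.linalg.norm(X_new[:, None, :] - self.X_[None, :, :], axis=2)
        self.n_distance_computations_ = dists.size
        nearest = np.argsort(dists, axis=1)[:, : self.k]
        neighbor_labels = self.y_[nearest]
        # Majority vote per query point (assumes non-negative integer labels)
        return np.array([np.bincount(row).argmax() for row in neighbor_labels])


rng = np.random.default_rng(0)
X_train = rng.normal(size=(1000, 2))
y_train = (X_train[:, 0] > 0).astype(int)
X_query = rng.normal(size=(5, 2))

model = LazyKNNClassifier(k=5).fit(X_train, y_train)
preds = model.predict(X_query)
print(model.n_distance_computations_)  # 5 queries x 1000 training points = 5000
```

The number of distance computations grows with the product of query points and training points, which is why prediction becomes the bottleneck as the training set grows.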

When to Use it?

KNN is particularly well-suited for the following scenarios:

Simple and Intuitive: it is a straightforward algorithm that is easy to understand and implement, making it a good choice for beginners or for quickly prototyping machine learning solutions.

Non-linear Relationships: it can effectively capture non-linear relationships in the data, as it does not make any assumptions about the underlying data distribution or the relationship between the features and the target variable.

Small to Medium-Sized Datasets: it performs well with small to medium-sized datasets, where comparing each new point against the stored training data remains cheap.

Multi-class Classification: it can naturally handle multi-class classification problems, where the target variable has more than two possible values.
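As a small illustration of the non-linear case, the sketch below fits KNN and a linear baseline on scikit-learn's synthetic "two moons" data, where the classes cannot be separated by a straight line. The dataset, the noise level, the split, and the choice of logistic regression as a baseline are all assumptions made for this example.

```python
from sklearn.datasets import make_moons
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Two interleaving half-circles: a non-linear decision boundary
X, y = make_moons(n_samples=500, noise=0.2, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=0)

knn = KNeighborsClassifier(n_neighbors=5).fit(X_train, y_train)
linear = LogisticRegression().fit(X_train, y_train)

knn_acc = knn.score(X_test, y_test)
linear_acc = linear.score(X_test, y_test)
print(f"KNN: {knn_acc:.3f}  logistic regression: {linear_acc:.3f}")
```

On data shaped like this you would typically expect KNN to follow the curved boundary more closely than the linear model, though the exact scores depend on the noise level and the split.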

Pros and Cons

Pros:

Simple and intuitive algorithm that is easy to understand and implement.

Effective in capturing non-linear relationships in the data.

Performs well with small to medium-sized datasets.

Suitable for multi-class classification problems.

Cons:

Can be computationally expensive for large datasets, as it requires calculating the distance between the new data point and all the training data points.

Sensitive to the choice of the distance metric and the value of K, which need to be carefully tuned.

May not perform as well as more advanced algorithms in high-dimensional feature spaces or with highly complex decision boundaries.

Python Implementation

Here's a basic example of KNN classification with the scikit-learn library:

from sklearn.neighbors import KNeighborsClassifier

# Create the KNN classifier

model = KNeighborsClassifier(n_neighbors=5)

# Train the model

model.fit(X_train, y_train)

# Predict using the trained model

predictions = model.predict(X_test)

In this example, we use the KNeighborsClassifier class from the scikit-learn library to create the KNN classifier. We set the number of neighbors (n_neighbors) to 5, but this is a hyperparameter that can be tuned. We then train the model on the training data and make predictions on the test data.
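For a version that runs end to end, the sketch below fills in the data-loading and evaluation steps that the snippet leaves out. The Iris dataset, the 75/25 split, and the random seed are assumptions chosen for illustration; substitute your own `X` and `y`.

```python
from sklearn.datasets import load_iris
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Example data (an assumption for this sketch)
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

# Create the classifier, fit it, and predict on the held-out data
model = KNeighborsClassifier(n_neighbors=5)
model.fit(X_train, y_train)
predictions = model.predict(X_test)

accuracy = accuracy_score(y_test, predictions)
print(f"Test accuracy: {accuracy:.3f}")
```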

For regression tasks, you can use the KNeighborsRegressor class instead:

from sklearn.neighbors import KNeighborsRegressor

# Create the KNN regressor

model = KNeighborsRegressor(n_neighbors=5)

# Train the model

model.fit(X_train, y_train)

# Make predictions on the test set

y_pred = model.predict(X_test)

Interpreting the Results

The key outputs from a KNN model that can be interpreted are:

Nearest Neighbors: The K data points that are closest to the new data point, which can provide insights into the similarities and differences between the new data point and the training data.

Distances: The distances to the nearest neighbors, computed with the chosen metric (such as Euclidean or Manhattan), show how similar the new data point is to its neighbors. Large distances suggest the point lies far from the training data, so the prediction deserves less trust.

Class Probabilities: For classification tasks, the algorithm can often provide the probabilities of the new data point belonging to each class, which can be used to assess the confidence in the predictions.

You can extract these outputs from your trained model like this:

# Get the distances and indices of the nearest neighbors
# (kneighbors returns the distances first, then the indices)

distances, neighbor_indices = model.kneighbors(X_test, return_distance=True)

# Get the class probabilities for the new data points

class_probabilities = model.predict_proba(X_test)

By analyzing these outputs, you can gain a better understanding of the decision-making process of the KNN model and the underlying relationships in the data.
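As a quick check of how these outputs relate to each other, the sketch below inspects a single test point. The Iris dataset and the split are assumptions for illustration. With the default uniform weights, each class probability should equal the share of the K neighbors that carry that label.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Example data (an assumption for this sketch)
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

model = KNeighborsClassifier(n_neighbors=5).fit(X_train, y_train)

# kneighbors returns (distances, indices), in that order
distances, neighbor_indices = model.kneighbors(X_test[:1])
neighbor_labels = y_train[neighbor_indices[0]]
probabilities = model.predict_proba(X_test[:1])[0]

# With uniform weights, each probability is the fraction of neighbors in that class
vote_share = np.bincount(neighbor_labels, minlength=len(model.classes_)) / model.n_neighbors
print(distances[0], neighbor_labels, probabilities, vote_share)
```

Looking up `neighbor_indices` in the training set lets you see exactly which examples drove a given prediction.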

In conclusion, K-Nearest Neighbors is a versatile and easy-to-understand machine learning algorithm that can be a valuable tool in the data scientist's toolkit. Its simplicity, ability to capture non-linear relationships, and suitability for small to medium-sized datasets make it a great choice for a variety of applications.

🎓 Advanced Machine Learning*

Have you outgrown introductory courses? Ready for a deeper dive?

Explore feature engineering and feature selection methods

Discover tactics for optimizing hyperparameters and addressing imbalanced data

Master fundamental machine learning methods and their Python application

Enroll today and take the next step in mastering the world of data science!

*Sponsored: by purchasing any of their courses you would also be supporting MLPills.

🤖 Tech Round-Up

No time to check the news this week?

This week's TechRoundUp is full of AI news. From Meta's AI chatbots to Spotify's new feature, the future is zooming towards us!

Let's dive into the latest Tech highlights you probably shouldn't miss this week 💥

1️⃣ WhatsApp is testing a Meta AI chatbot in India and Africa

This is part of Meta's plan to expand its AI services 🌍

Are you excited to see more AI integration in your messaging apps? 🤔

2️⃣ Tired of your smartphone? 🤔

A new wearable called the Ai Pin is here!

This $699 device uses a projector and AI assistant to let you complete tasks hands-free.

Sounds futuristic, but... is it worth the price tag? 💸

3️⃣ Big news for photo lovers!

Google Photos is getting awesome new AI-powered editing tools! ✨

These include Magic Eraser, Photo Unblur, and Magic Editor, which lets you change backgrounds and remove objects.

No more need for fancy editing software! 📸

4️⃣ Meta is catching up in the AI race with its new custom AI chip, the MTIA!

This powerful chip is designed to run and train AI models faster 💪

Will it help Meta compete with Google and Amazon in the world of AI? 🤔

5️⃣ Spotify is rolling out a new AI-powered playlist feature! 🎧

You'll be able to ask for playlists based on mood, genre, or even color!

This feature is still in testing, but it sounds like a cool way to personalize your listening experience. 😎