Issue #55 - Vector Databases and their importance

Today we are introducing a new type of issue: “Podcast notes” 🎉

In these issues we will pick a technical podcast on a relevant topic, share the main takeaways, and complement them with additional information. I hope you enjoy it!

💊 Pill of the week

This week’s podcast is Intro to Vector Databases by The Cloudcast, in which Jeff Huber (co-founder and CEO of Chroma) talks about vector databases, data integration, RAG, embedding models, and AI integration.

What is a Vector Database?

A vector database is a database system designed to store and search high-dimensional vector representations of data, such as text embeddings produced by embedding models. It supports efficient nearest-neighbor search, so items can be retrieved by semantic similarity.

The key innovation is the use of vector embeddings, where data like text is converted into a dense vector representation that captures semantic meaning. Similar concepts are mapped to nearby points in the high-dimensional vector space, enabling fuzzy matching and retrieval based on conceptual relevance rather than just keyword matching.
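To make "nearby points" concrete, here is a minimal sketch of cosine similarity, one common way to measure how close two embeddings are. The three-dimensional vectors are hand-written for illustration and do not come from a real embedding model, which would typically produce hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy "embeddings": illustrative values only, not the output of a real model.
toy_embeddings = {
    "vacation policy": [0.9, 0.1, 0.0],
    "paid time off": [0.8, 0.2, 0.1],
    "GPU benchmarks": [0.0, 0.1, 0.9],
}

# Rank every entry by similarity to the "vacation policy" vector.
query = toy_embeddings["vacation policy"]
ranked = sorted(
    toy_embeddings,
    key=lambda name: cosine_similarity(query, toy_embeddings[name]),
    reverse=True,
)
print(ranked)
```

In this toy setup "paid time off" ranks right behind "vacation policy" even though the two phrases share no words, which is the behaviour keyword matching cannot give you.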

More details about Vector Databases here:

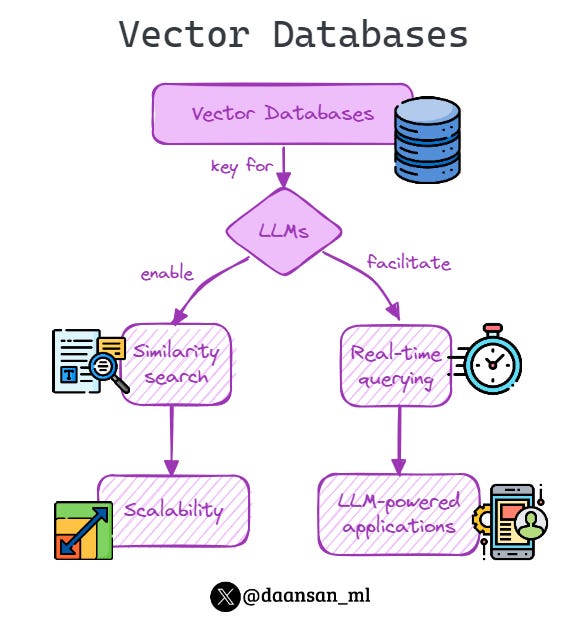

Why Vector Databases are important for AI

Vector databases play a crucial role in integrating large AI models, especially large language models (LLMs), with specific datasets and domain knowledge. They bridge the gap between these powerful but general AI models and an organization's proprietary data.

For example, while models like ChatGPT have broad knowledge, they don't automatically know details like a company's HR policy. A vector database allows semantically relevant docs from the company knowledge base to be retrieved and provided to the LLM as context, enabling it to then synthesize an accurate response grounded in the company's specific data.

📝 Before continuing, let’s look in more detail at what RAG is…

RAG stands for Retrieval-Augmented Generation. It refers to the technique of using a retrieval system, like a vector database, to first retrieve relevant pieces of information or context documents, and then providing those retrieved contexts as additional inputs to a language model during the generation process.

The key steps in a RAG pipeline are:

Take the user's input query or prompt

Encode the query into a vector embedding

Use that query embedding to find its nearest neighbors in the vector database and retrieve the documents (or chunks) they represent

Pass the retrieved text, along with the original query, into a language model

The language model generates an output that is conditioned on both the query and the retrieved context information

So in essence, RAG allows you to augment the generative capabilities of a language model by first retrieving pertinent supporting context from a vector database. This helps ground the language model's response in factual information relevant to the query, which reduces (but does not eliminate) hallucinations and nonsensical outputs.

This retrieval-augmented generation (RAG) workflow of retrieving relevant data chunks from a vector database and using them as context for an LLM has become extremely popular for building AI applications that combine the capabilities of large models with data privacy and domain-specificity.
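The five steps above can be sketched in a few lines. This is a toy under stated assumptions, not how Chroma or any other product implements it: `embed` is a bag-of-words stand-in for a real embedding model, `retrieve` is a brute-force search over a plain list instead of a vector database, and `build_prompt` is a hypothetical helper. The final call to a language model is left out.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(query, documents, k=2):
    """Steps 2-3: embed the query and return the k most similar documents."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def build_prompt(query, context_docs):
    """Step 4: place the retrieved text (not the vectors) before the question."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

documents = [
    "Employees receive 25 days of paid vacation per year.",
    "The office kitchen is cleaned every Friday.",
    "Vacation requests must be approved by a manager.",
]

query = "How many vacation days do employees get?"  # Step 1: the user's query
top_docs = retrieve(query, documents, k=2)
prompt = build_prompt(query, top_docs)
print(prompt)  # Step 5 would send this prompt to a language model.
```

Note that the model never sees the vectors themselves. They are only used to decide which pieces of text end up in the prompt.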

Foundational Models and Integration

Vector databases are a pluggable component that can be used with any embedding model that produces vector representations, as well as any language model that accepts additional context in its input. They work with both open models like LLaMA and MPT and closed models like GPT-4.

The key integration point is simply the context window - the input window where relevant retrieved chunks from the vector database are provided to the language model as additional context information.
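One way to picture this pluggability is a store that accepts any embedding function at construction time. The sketch below is purely illustrative: `TinyVectorStore`, `letter_embedder` and `length_embedder` are hypothetical names, the two embedders are deliberately silly, and the search is brute force. In practice the `embed` argument would wrap a call to a real embedding model.

```python
from typing import Callable, List, Sequence, Tuple

Vector = Sequence[float]

class TinyVectorStore:
    """Hypothetical in-memory store with brute-force search (illustration only)."""

    def __init__(self, embed: Callable[[str], Vector]):
        self.embed = embed  # any function mapping text to a vector
        self.items: List[Tuple[Vector, str]] = []

    def add(self, text: str) -> None:
        self.items.append((self.embed(text), text))

    def query(self, text: str, k: int = 1) -> List[str]:
        q = self.embed(text)

        def squared_distance(v: Vector) -> float:
            return sum((a - b) ** 2 for a, b in zip(q, v))

        ranked = sorted(self.items, key=lambda item: squared_distance(item[0]))
        return [doc for _, doc in ranked[:k]]

def letter_embedder(text: str) -> List[float]:
    """Toy embedder A: relative frequency of each letter a-z."""
    text = text.lower()
    return [text.count(c) / max(len(text), 1) for c in "abcdefghijklmnopqrstuvwxyz"]

def length_embedder(text: str) -> List[float]:
    """Toy embedder B: character count and word count."""
    return [float(len(text)), float(len(text.split()))]

# The same store code works unchanged with either embedder.
results = {}
for embedder in (letter_embedder, length_embedder):
    store = TinyVectorStore(embedder)
    store.add("cat")
    store.add("a very long sentence about dogs")
    results[embedder.__name__] = store.query("cats", k=1)
print(results)
```

The only rule is consistency: documents and queries must be embedded with the same function, otherwise distances between them are meaningless.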

What is a context window and why could this be a problem?

The context window imposes a key constraint and bottleneck when using retrieval-augmented generation (RAG) with large language models.

Most large language models have a maximum context length: a limit on the number of tokens (words or subword pieces) they can process in a single request.

This context window size limits how much retrieved context information from the vector database can be included alongside the original query/prompt.

If the total context size exceeds the model's window, the context has to be truncated or split across multiple forward passes, which can negatively impact performance and coherence.
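A simple way to respect this limit is to add retrieved chunks in relevance order until the budget runs out. The sketch below assumes one token per whitespace-separated word, which is only a rough approximation because real models use subword tokenizers. `count_tokens` and `pack_context` are hypothetical helpers, and the 25-token window is unrealistically small so the effect is visible.

```python
def count_tokens(text):
    """Crude assumption: one token per whitespace-separated word.
    Real tokenizers split text into subwords, so actual counts differ."""
    return len(text.split())

def pack_context(chunks, query, max_tokens):
    """Keep the highest-ranked chunks that fit in the window next to the query.

    `chunks` is assumed to be sorted from most to least relevant.
    """
    budget = max_tokens - count_tokens(query)
    selected = []
    for chunk in chunks:
        cost = count_tokens(chunk)
        if cost > budget:
            break  # stop at the first chunk that does not fit
        selected.append(chunk)
        budget -= cost
    return selected

ranked_chunks = [
    "Employees receive 25 days of paid vacation per year.",  # 9 words
    "Vacation requests must be approved by a manager.",  # 8 words
    "Unused vacation days expire at the end of March.",  # 9 words
]
query = "How many vacation days do employees get?"  # 7 words

kept = pack_context(ranked_chunks, query, max_tokens=25)
print(kept)
```

With a 25-token window, the query uses 7 tokens and the first two chunks use 17 more, so the third chunk is dropped. A real application would also reserve part of the window for the model's answer.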

While vector databases don't require model fine-tuning, they can be combined with fine-tuning approaches. Fine-tuning changes how the model thinks by updating its parameters on domain data, while vector retrieval supplies what the model should think about by surfacing relevant context documents.

RAG vs Fine-Tuning Tradeoffs

Jeff Huber, co-founder of Chroma (a popular vector database), outlines the tradeoffs:

For most current practical/business use cases (~80-90%), RAG with no model fine-tuning is the recommended approach. Fine-tuning large models to memorize factual knowledge is very difficult, so retrieving relevant context data from a vector database works better.

Fine-tuning today is mainly used for learning "how to think" in a very specialized domain language, like legal or medical jargon. Retrieval is still important for providing "what to think about" context.

However, in the future more applications may use both fine-tuning and retrieval. Fine-tuning will allow using smaller, cheaper models customized for a task. But retrieval will still provide grounded factual context.

The decision depends on the application - there is no universal "either/or", as fine-tuning and retrieval solve complementary issues. But for many current real-world deployments, efficient vector retrieval without fine-tuning is a powerful and accessible approach.

🎓Learn Real-World Machine Learning!*

Do you want to learn Real-World Machine Learning?

Data Science doesn’t finish with the model training… There is much more!

Here you will learn how to deploy and maintain your models, so they can be used in a Real-World environment:

Elevate your ML skills with "Real-World ML Tutorial & Community"! 🚀

Business to ML: Turn real business challenges into ML solutions.

Data Mastery: Craft perfect ML-ready data with Python.

Train Like a Pro: Boost your models for peak performance.

Deploy with Confidence: Master MLOps for real-world impact.

🎁 Special Offer: Use "MASSIVE50" for 50% off.

*Sponsored

🤖 Tech Round-Up

No time to check the news this week?

This week's Tech Round-Up comes full of AI news, from Meta's Llama 3 to Google going all-in on AI!

Let's dive into the latest tech highlights you probably shouldn’t miss this week 💥

1️⃣ Meta's Llama 3

Meta has unveiled Llama 3, claiming it's one of the best open models out there.

This new generative AI model aims to enhance how we interact with machine learning across various applications.

2️⃣ Hugging Face's New AI Benchmark

Hugging Face introduces a new benchmark for evaluating generative AI in health-related tasks.

This could revolutionize how AI supports decision-making in healthcare, offering more accurate and reliable tools. 🏥

3️⃣ LinkedIn's Premium Company Pages

LinkedIn's latest update boosts visibility for Premium company pages, offering deeper insights into who's checking out your business.

This could be a game-changer for B2B engagement on the platform. 📈

4️⃣ Intel's Open AI Commitment

Intel is stepping up with other tech giants to commit to building open generative AI tools for enterprise use.

This collaborative effort underscores the industry's move towards more transparent and accessible AI solutions.

5️⃣ Google's All-In on Generative AI

Google is going all-in on generative AI at its Google Cloud Next event to shape the future of cloud.

They're integrating AI deeply into their cloud offerings, signaling a major shift in how businesses will use cloud computing. ☁️