💊 Pill of the week

Retrieval-Augmented Generation (RAG) is an approach that allows large language models to leverage external knowledge sources by tightly integrating information retrieval and natural language generation capabilities.

Let’s learn more about it!

What is Retrieval-Augmented Generation (RAG)?

As large language models such as GPT-4 and LLaMA become better at understanding and generating human-like text, a key challenge is grounding their responses in factual, up-to-date knowledge that goes beyond what was present in their training data. This is where the Retrieval-Augmented Generation (RAG) framework comes in.

RAG is an approach that tightly integrates large language models with customizable knowledge bases containing documents, databases, websites and other data sources relevant to a particular domain or use case.

RAG allows AI systems to dynamically retrieve and synthesize information from these external sources into their generated outputs.

RAG function and use cases

The core function of RAG is to bridge the gap between the powerful general language understanding capabilities of large language models and the wealth of real-world knowledge locked away in textual data sources. This unlocks applications where AI needs to provide accurate, relevant and substantive responses grounded in constantly evolving external information.

Some key use cases where RAG can be applied include:

Domain-specific question answering (e.g. customer support, healthcare, legal)

Querying and explaining structured data like databases and spreadsheets

Assistants that can reference and reason over technical documentation

Creative writing assisted by accessing relevant background knowledge

Building AI agents that can engage in multi-turn dialogues informed by latest information

How does RAG work?

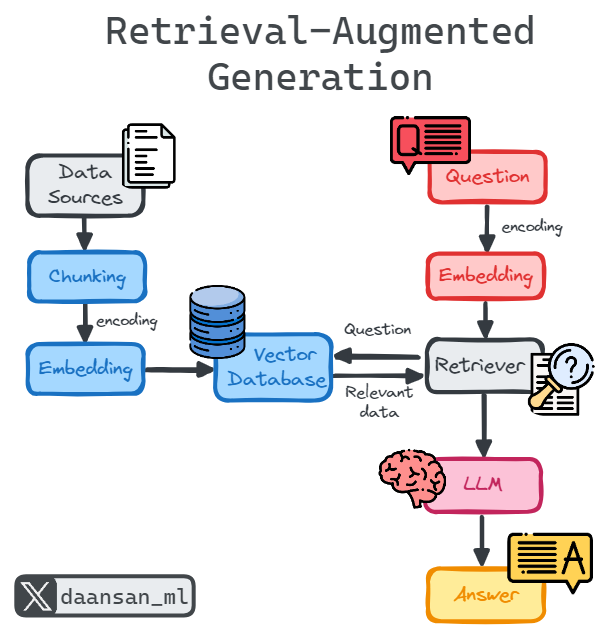

At its core, RAG follows a multi-step retrieval and generation process:

Data Collection & Preparation

The first step is curating the external data sources relevant to the target use case: documentation, databases, websites, research papers, etc. This data, much of it unstructured, is then cleaned, converted to plain text, and optionally filtered by quality, recency, domain relevance, etc.
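A minimal sketch of the cleaning and filtering step, assuming the raw documents arrive as HTML strings with a publication date. The function names and the thresholds (`min_words`, `not_before`) are hypothetical; a real pipeline would tune them for its own sources.

```python
import html
import re
from datetime import date


def clean_text(raw: str) -> str:
    """Strip HTML tags, unescape entities and collapse whitespace."""
    no_tags = re.sub(r"<[^>]+>", " ", raw)      # drop HTML markup
    unescaped = html.unescape(no_tags)          # e.g. "&amp;" -> "&"
    return re.sub(r"\s+", " ", unescaped).strip()


def keep_document(text: str, published: date, *, min_words: int = 20,
                  not_before: date = date(2023, 1, 1)) -> bool:
    """Hypothetical quality/recency filter: long enough and recent enough."""
    return len(text.split()) >= min_words and published >= not_before


raw_docs = [
    ("<p>Reset your password from the <b>Settings</b> page &amp; confirm by email.</p>", date(2024, 3, 1)),
    ("<p>Old FAQ entry.</p>", date(2019, 5, 10)),
]
corpus = [clean_text(raw) for raw, published in raw_docs]
```

Filtering could equally be done by source reputation or language detection; recency and length are just two simple, easy-to-explain signals.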

Data Chunking

Instead of storing documents as monolithic blocks, RAG pipelines typically split them into smaller passages, or chunks, ideally each focused on a single topic (for example by splitting on paragraph or section boundaries, or by detecting topic shifts with embeddings). This lets later stages pinpoint and retrieve only the most pertinent chunks rather than full documents, improving efficiency and relevance.
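Here is a simple chunker as a sketch. It uses paragraph boundaries as a cheap proxy for topic boundaries (true semantic chunking would compare embeddings of neighbouring sentences instead) and packs paragraphs into chunks of at most `max_words` words. The function name and the default budget are assumptions for illustration.

```python
def chunk_document(text: str, max_words: int = 120) -> list[str]:
    """Pack blank-line-separated paragraphs into chunks of <= max_words words.

    A paragraph longer than max_words is hard-split into word windows.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    current_len = 0

    for para in paragraphs:
        words = para.split()
        if len(words) > max_words:
            # Flush the pending chunk, then split the oversized paragraph.
            if current:
                chunks.append("\n\n".join(current))
                current, current_len = [], 0
            for i in range(0, len(words), max_words):
                chunks.append(" ".join(words[i:i + max_words]))
            continue
        if current and current_len + len(words) > max_words:
            # Adding this paragraph would exceed the budget: start a new chunk.
            chunks.append("\n\n".join(current))
            current, current_len = [], 0
        current.append(para)
        current_len += len(words)

    if current:
        chunks.append("\n\n".join(current))
    return chunks


doc = "a b c\n\nd e\n\nf g h i"
print(chunk_document(doc, max_words=5))
```

Keeping whole paragraphs together means a chunk rarely cuts a thought in half; many pipelines also add a small overlap between consecutive chunks for the same reason.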

Document Embeddings

Using transformer-based encoders, each text chunk is converted into a numeric vector (an embedding) that captures its semantic meaning. This high-dimensional representation allows queries to be matched to relevant chunks based on conceptual similarity rather than just lexical overlap.
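To show the shape of this step without downloading a model, here is a toy stand-in for a transformer encoder (an assumption for illustration, not what a real RAG system uses): it hashes words into a fixed-length vector and normalizes it to unit length. It only captures word overlap, not meaning, but it illustrates the data flow of text in, fixed-size vector out. In practice you would replace `embed` with a real encoder, for example a model from the sentence-transformers library.

```python
import hashlib
import math
import re

DIM = 256  # hypothetical size; real encoders output hundreds to thousands of dims


def embed(text: str, dim: int = DIM) -> list[float]:
    """Toy embedding: hash each lowercase token into one of `dim` buckets,
    count occurrences, then L2-normalise. NOT semantic, illustration only."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # md5 gives a stable bucket across runs (built-in hash() is salted).
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


chunk_vectors = [embed(c) for c in ["Reset your password in Settings.",
                                    "Invoices are emailed monthly."]]
```

Normalizing to unit length is a common convention: it makes the dot product of two embeddings equal to their cosine similarity, which simplifies the search step.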

Question Encoding & Retrieval

When a user submits a question, the same embedding model encodes it into a vector. An efficient vector similarity search (for example, by cosine similarity) then retrieves the most relevant document chunks by finding the nearest neighbors of the query embedding.
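The retrieval step can be sketched as an exact, brute-force cosine-similarity search. The three-dimensional vectors below are made up so the result is easy to check by hand; at scale, vector databases and approximate nearest-neighbor indexes (such as FAISS or HNSW-based indexes) replace this linear scan.

```python
import heapq
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def top_k(query_vec: list[float], chunk_vecs: list[list[float]],
          k: int = 3) -> list[tuple[int, float]]:
    """Score every chunk against the query and return (index, score)
    pairs for the k most similar chunks, best first."""
    scored = ((cosine(query_vec, v), i) for i, v in enumerate(chunk_vecs))
    return [(i, s) for s, i in heapq.nlargest(k, scored)]


chunk_vecs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.9, 0.1, 0.0]]
query_vec = [1.0, 0.0, 0.0]
results = top_k(query_vec, chunk_vecs, k=2)
```

Here chunk 0 is an exact match and chunk 2 points in almost the same direction, so those two are returned, while the orthogonal chunk 1 is left out.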

Language Model Integration

The retrieved context chunks and the original query are formatted as a prompt and passed to an LLM such as GPT-4 or LLaMA. The language model attends over this combined context to generate a relevant, coherent and up-to-date response that draws on the external knowledge.
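A minimal sketch of the prompt-assembly step. The instruction wording, the numbered-passage layout and the character budget are all assumptions; the actual call to GPT-4, LLaMA or any other model is left out because it depends on the client you use.

```python
def build_prompt(question: str, chunks: list[str], max_chars: int = 4000) -> str:
    """Assemble a grounded prompt: numbered context passages, then the question.

    Chunks are added in rank order until the (hypothetical) character budget
    would be exceeded, as a crude way to respect the model's context window.
    """
    header = ("Answer the question using only the context below. "
              "If the context does not contain the answer, say so.\n\n")
    context_lines: list[str] = []
    used = 0
    for n, chunk in enumerate(chunks, start=1):
        entry = f"[{n}] {chunk.strip()}"
        if used + len(entry) > max_chars:
            break  # stop before overflowing the budget
        context_lines.append(entry)
        used += len(entry)
    context = "\n".join(context_lines)
    return f"{header}Context:\n{context}\n\nQuestion: {question.strip()}\nAnswer:"


prompt = build_prompt("How do I reset my password?",
                      ["Go to Settings.", "Click Reset."])
print(prompt)
```

Numbering the passages makes it easy to ask the model to cite which chunk supports each part of its answer.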

Compared with traditional information retrieval, which simply looks up and returns passages verbatim, RAG's neural approach lets language models synthesize and reason over the retrieved knowledge and incorporate it into their generated output.

🎓 Advanced Machine Learning*

Have you outgrown introductory courses? Ready for a deeper dive?

Explore feature engineering and feature selection methods

Discover tactics for optimizing hyperparameters and addressing imbalanced data

Master fundamental machine learning methods and their Python application

Enroll today and take the next step in mastering the world of data science!

*Sponsored: by purchasing any of their courses you would also be supporting MLPills.

🤖 Tech Round-Up

No time to check the news this week?

This week's Tech Round-Up is packed with AI news, from the rise of vector databases to Nvidia’s acquisition of RunAI!

Let's dive into the tech highlights you probably shouldn’t miss this week 💥

1️⃣ The Rise of Vector Databases

As AI grows, so does the need for databases that handle unstructured data such as images and text.

Companies like Qdrant are at the forefront, raising $28M to enhance data processing with vector embeddings.

Speaking of funding, xAI, Elon Musk’s OpenAI rival, is nearing a $6B milestone with its investor X!

Big news for the AI community as they push boundaries in generative AI. 🤖

3️⃣ Meet the Future of Robotics

Sanctuary's latest humanoid robot learns faster and costs less, setting a new standard in robotics.

It's not just about making robots but evolving them to be more accessible and efficient.

OpenAI isn’t slowing down either, quietly raising $15M for its startup fund.

This move signals continued growth and interest in nurturing innovative AI-driven projects. 💸

5️⃣ Nvidia Expands AI Capabilities with Strategic Acquisition of RunAI

Nvidia’s acquisition of the AI workload management startup RunAI signals a push to strengthen its AI infrastructure.

This could change how efficiently we manage AI workloads!
