Issue #57 - Evaluating ARIMA Performance

💊 Pill of the week

Time to go back to Time Series with ARIMA. Let’s pick up where we left off last time.

Today it is time to learn how to evaluate the performance of your ARIMA model:

Evaluating ARIMA Performance

When working with time series data and forecasting models like ARIMA, it is essential to evaluate the performance of an ARIMA model to ensure its reliability and accuracy. Evaluating a model's performance not only provides insights into its strengths and weaknesses but also allows for comparisons between different model specifications or alternative forecasting approaches. By assessing various performance metrics, analysts can make informed decisions about which model best fits their data and objectives, ultimately leading to more accurate and trustworthy forecasts.

The evaluation process typically involves the following steps:

First, the available data is split into two parts: a training set and a test set (or hold-out set). For time series, the split is chronological, with the most recent observations held out.

The ARIMA model is first trained, or fitted, on the training set, which involves estimating the model's parameters based on the historical data.

Once the model is trained, its performance is evaluated by comparing its predictions on the test set against the actual observed values.

This approach simulates a real-world scenario where the model is used to forecast future values based on past data.
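The steps above can be sketched as follows. This is a minimal illustration, not a full workflow: the helper name `chronological_split`, the 80/20 ratio, and the toy series are assumptions made for the example, and a naive last-value forecast stands in for the predictions of a fitted ARIMA model.

```python
import numpy as np

def chronological_split(series, test_size=0.2):
    """Split a series into train/test parts without shuffling (hypothetical helper)."""
    series = np.asarray(series, dtype=float)
    n_test = max(1, int(round(len(series) * test_size)))
    return series[:-n_test], series[-n_test:]

# Toy data, made up for illustration only
series = np.array([10.0, 12.0, 13.0, 12.0, 15.0, 16.0, 18.0, 17.0, 19.0, 21.0])

# Step 1: hold out the most recent observations
train, test = chronological_split(series, test_size=0.2)

# Step 2 (placeholder): repeat the last training value as the forecast.
# In practice, replace this with the forecasts of an ARIMA model fitted on `train`.
y_pred = np.full(len(test), train[-1])

# Step 3: compare the forecasts against the held-out observations
errors = test - y_pred
print(len(train), len(test))
print(errors)
```

The forecast errors computed in the last step are the raw material for every metric discussed in the rest of this issue.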

Several metrics can be employed to quantify the performance of an ARIMA model, each with its own strengths, weaknesses, and underlying assumptions. In this article, we will explore four commonly used metrics:

Mean Squared Error (MSE)

Root Mean Squared Error (RMSE)

Mean Absolute Error (MAE)

Mean Absolute Percentage Error (MAPE).

These metrics provide different perspectives on the model's accuracy, allowing for a comprehensive evaluation of its performance.

By carefully considering the characteristics of the time series data, the nature of the forecasting problem, and the specific objectives of the analysis, analysts can choose the most appropriate metric(s) to evaluate their ARIMA model's performance. Additionally, using a combination of metrics can provide a more holistic assessment, capturing different aspects of the model's accuracy and reliability.

Ultimately, the evaluation process is crucial for ensuring the validity and trustworthiness of ARIMA model forecasts, which can have significant implications in decision-making processes across various domains.

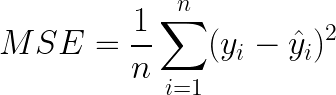

Mean Squared Error (MSE)

The Mean Squared Error (MSE) is a widely used measure for evaluating the performance of forecasting models. It calculates the average squared difference between the observed values and the predicted values from the ARIMA model.

The MSE is computed as:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2$$

Where:

n is the number of observations

yᵢ is the observed value

ŷᵢ is the predicted value from the ARIMA model

Here is the implementation in Python:

```python
import pandas as pd

y_true = pd.Series([...])  # Observed values (replace [...] with your data)
y_pred = pd.Series([...])  # Predicted values from the ARIMA model

# Average of the squared forecast errors
mse = ((y_true - y_pred) ** 2).mean()
```

Lower values of MSE indicate better model performance, as they represent smaller deviations between the observed and predicted values. However, the MSE is sensitive to outliers, as squaring the errors can amplify the impact of large deviations.
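To make the outlier sensitivity concrete, here is a small worked example. The numbers are made up for illustration and do not come from a real ARIMA model.

```python
import pandas as pd

# Toy values, made up for illustration only
y_true = pd.Series([3.0, 5.0, 2.0, 7.0])
y_pred = pd.Series([2.5, 5.0, 4.0, 8.0])

squared_errors = (y_true - y_pred) ** 2  # 0.25, 0.0, 4.0, 1.0
mse = squared_errors.mean()              # (0.25 + 0 + 4 + 1) / 4 = 1.3125

# Share of the total squared error that comes from the single largest miss
largest_share = squared_errors.max() / squared_errors.sum()

print(mse)
print(largest_share)
```

In this toy case one forecast out of four accounts for roughly three quarters of the total squared error, which is exactly the amplification effect described above.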

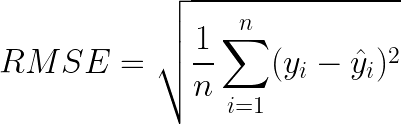

Root Mean Squared Error (RMSE)

The Root Mean Squared Error (RMSE) is the square root of the MSE, providing an interpretation in the same units as the original data.

The RMSE is computed as:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}$$

Here is the implementation in Python:

```python
import numpy as np
import pandas as pd

y_true = pd.Series([...])  # Observed values (replace [...] with your data)
y_pred = pd.Series([...])  # Predicted values from the ARIMA model

# Square root of the mean squared error
rmse = np.sqrt(((y_true - y_pred) ** 2).mean())
```

Lower values of RMSE indicate better model performance, similar to the MSE. The RMSE is also sensitive to outliers due to the squaring of errors.
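The "same units" property can be checked with a quick experiment. The sketch below uses made-up numbers and two small helpers (`mse` and `rmse`, defined here only for the example): rescaling the data by a factor of 1000 multiplies the RMSE by 1000, while the MSE grows by 1000 squared.

```python
import numpy as np
import pandas as pd

# Toy values, made up for illustration only
y_true = pd.Series([3.0, 5.0, 2.0, 7.0])
y_pred = pd.Series([2.5, 5.0, 4.0, 8.0])

def mse(observed, predicted):
    """Mean squared error (in squared units of the data)."""
    return ((observed - predicted) ** 2).mean()

def rmse(observed, predicted):
    """Root mean squared error (in the units of the data)."""
    return np.sqrt(mse(observed, predicted))

scale = 1000  # e.g. going from thousands of units to single units

rmse_ratio = rmse(y_true * scale, y_pred * scale) / rmse(y_true, y_pred)
mse_ratio = mse(y_true * scale, y_pred * scale) / mse(y_true, y_pred)

print(rmse(y_true, y_pred))  # about 1.1456
print(rmse_ratio)            # about 1000
print(mse_ratio)             # about 1,000,000
```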

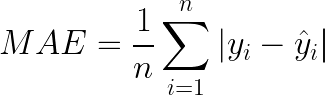

Mean Absolute Error (MAE)

The Mean Absolute Error (MAE) calculates the average absolute difference between the observed values and the predicted values from the ARIMA model.

The MAE is computed as:

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|y_i - \hat{y}_i\right|$$

Here is the implementation in Python:

```python
import pandas as pd

y_true = pd.Series([...])  # Observed values (replace [...] with your data)
y_pred = pd.Series([...])  # Predicted values from the ARIMA model

# Average of the absolute forecast errors
mae = (y_true - y_pred).abs().mean()
```

Similar to MSE and RMSE, lower values of MAE indicate better model performance. However, unlike MSE and RMSE, the MAE is less sensitive to outliers since it does not square the errors.
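A quick way to see the difference in outlier sensitivity is to compare two sets of forecast errors with the same total absolute error. The error values below are invented for the illustration: one set spreads the error evenly, the other concentrates it in a single large miss.

```python
import numpy as np

def mae(errors):
    """Mean absolute error from an array of forecast errors."""
    return np.mean(np.abs(errors))

def rmse(errors):
    """Root mean squared error from an array of forecast errors."""
    return np.sqrt(np.mean(np.square(errors)))

# Toy errors, made up for illustration only
even_errors = np.array([1.0, 1.0, 1.0, 1.0])   # error spread evenly
spiky_errors = np.array([0.0, 0.0, 0.0, 4.0])  # one large miss

print(mae(even_errors), rmse(even_errors))     # 1.0 1.0
print(mae(spiky_errors), rmse(spiky_errors))   # 1.0 2.0
```

The MAE is identical in both cases, while the RMSE doubles when the same total error is packed into one observation.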

Mean Absolute Percentage Error (MAPE)

The Mean Absolute Percentage Error (MAPE) is particularly useful when dealing with time series data with different scales or magnitudes. It expresses the average absolute percentage error between the observed and predicted values.

The MAPE is computed as:

$$\mathrm{MAPE} = \frac{100}{n}\sum_{i=1}^{n}\left|\frac{y_i - \hat{y}_i}{y_i}\right|$$

Here is the implementation in Python:

```python
import numpy as np
import pandas as pd

y_true = pd.Series([...])  # Observed values (replace [...] with your data)
y_pred = pd.Series([...])  # Predicted values from the ARIMA model

# Average absolute error relative to the observed values, in percent
mape = np.mean(np.abs((y_true - y_pred) / y_true)) * 100
```

The MAPE is expressed as a percentage, with lower values indicating better model performance. However, it is important to note that the MAPE is sensitive to observed values near zero: the denominator in the calculation becomes very small, leading to very large percentage errors.
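The near-zero problem is easy to reproduce. The sketch below wraps the formula in a small helper (the name `mape` and the choice to raise an error on zeros are assumptions for this example, not a standard API) and applies it to two made-up cases with the same absolute error of 0.5 on every point.

```python
import numpy as np
import pandas as pd

def mape(y_true, y_pred):
    """MAPE in percent; raises if any observed value is zero (hypothetical helper)."""
    y_true = pd.Series(y_true, dtype=float)
    y_pred = pd.Series(y_pred, dtype=float)
    if (y_true == 0).any():
        raise ValueError("MAPE is undefined when an observed value is zero")
    return float(np.mean(np.abs((y_true - y_pred) / y_true)) * 100)

# Toy values, made up for illustration only.
# Every forecast is off by 0.5, but one observed value is close to zero.
large_values = mape([100.0, 200.0], [100.5, 200.5])
near_zero = mape([100.0, 0.1], [100.5, 0.6])

print(large_values)  # roughly 0.375 (percent)
print(near_zero)     # roughly 250.25 (percent)
```

Raising an error on zeros is only one possible design choice; dropping those points or switching to a different metric are alternatives, depending on the analysis.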

Considerations and Trade-offs

The choice of evaluation metric(s) for an ARIMA model depends on several factors:

Characteristics of the time series data

The nature of the forecasting problem

Specific objectives of the analysis

Each metric has its own strengths, weaknesses, and underlying assumptions, making it important to carefully consider the appropriateness of each metric for a given use case:

The MSE and RMSE are widely used due to their simplicity and interpretability, but they are sensitive to outliers and scale-dependent.

The MAE is a robust alternative, as it is less sensitive to outliers, but it is still scale-dependent.

The MAPE is particularly useful when working with time series data that have different scales or magnitudes, as it is scale-invariant. However, the MAPE has a significant limitation: it is undefined when any observed value is zero, and it produces misleadingly large results when observed values are close to zero.

In practice, a combination of these metrics is often used to comprehensively assess the performance of an ARIMA model. For example, the RMSE or MAE can be used along with the MAPE to capture different aspects of model performance.
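One way to report several metrics together is a small helper that returns them in a single dictionary. This is a sketch under assumptions: the function name `evaluate_forecast`, the choice to return NaN for the MAPE when an observed value is zero, and the toy numbers are all made up for the example.

```python
import numpy as np

def evaluate_forecast(y_true, y_pred):
    """Return MSE, RMSE, MAE and MAPE for a forecast (hypothetical helper)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    errors = y_true - y_pred

    mse = float(np.mean(errors ** 2))
    report = {
        "MSE": mse,
        "RMSE": float(np.sqrt(mse)),
        "MAE": float(np.mean(np.abs(errors))),
    }
    # The MAPE is only defined when no observed value is zero
    if np.all(y_true != 0):
        report["MAPE"] = float(np.mean(np.abs(errors / y_true)) * 100)
    else:
        report["MAPE"] = float("nan")
    return report

# Toy values, made up for illustration only
report = evaluate_forecast([100.0, 200.0, 400.0], [110.0, 190.0, 400.0])
for name, value in report.items():
    print(f"{name}: {value:.3f}")
```

Reading the scale-dependent metrics (RMSE, MAE) next to the percentage-based one (MAPE) makes it easier to spot cases where they disagree.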

As a rule of thumb:

If the time series data does not contain outliers and the scale of the data is consistent, the MSE or RMSE can be suitable choices.

If the time series data contains occasional extreme values or outliers, the MAE may be a more appropriate choice.

If the time series data has different scales or magnitudes, and the observed values are not close to zero, the MAPE can be a useful metric for comparing model performance across different time series.

By carefully considering the strengths and limitations of each metric, and by using a combination of metrics when appropriate, analysts can ensure a comprehensive evaluation of their ARIMA model's performance, leading to more reliable and accurate forecasts.


🤖 Tech Round-Up

No time to check the news this week?

This week's Tech Round-Up comes full of AI news, from Anthropic's new launch to Airbnb's new AI-based feature!

Let's dive into the latest Tech highlights you probably shouldn’t miss this week 💥

Anthropic is making moves with a new premium AI plan aimed at businesses, stepping up the competition in the AI space.

Big news for companies looking to leverage cutting-edge AI tools! 🌐

GitHub's Copilot Workspace is revolutionizing software engineering with AI.

It's like having a co-pilot for coding, making software development smoother and more intuitive. Developers, take note! 🛠️💻

Apple's latest earnings reveal a deep dive into AI, promising a new era of smart tech.

Expect more personalized and seamless experiences across Apple devices. 🍏🔍

'X' now offers 'Stories on X', summarizing news via Grok AI.

This tool provides quick, digestible news updates, a game-changer for staying informed on the go! 📰🤖

5️⃣ Airbnb's AI-Enhanced Booking

Airbnb's new group booking feature taps into AI to enhance customer service, making group travel planning a breeze.

Say goodbye to booking hassles! 🏠✈️